Dumb Dichotomies in Ethics, Part 1: Intentions vs Consequences

1. Intro

Over the last few years, I’ve taken three distinct “intro to philosophy” classes: one in high school, and then both “Intro to Philosophy” and “Intro to Ethics” in college. If the intentions vs consequences / Kant vs Mill / deontology vs utilitarianism debate were a horse, it would have been beaten dead long ago.

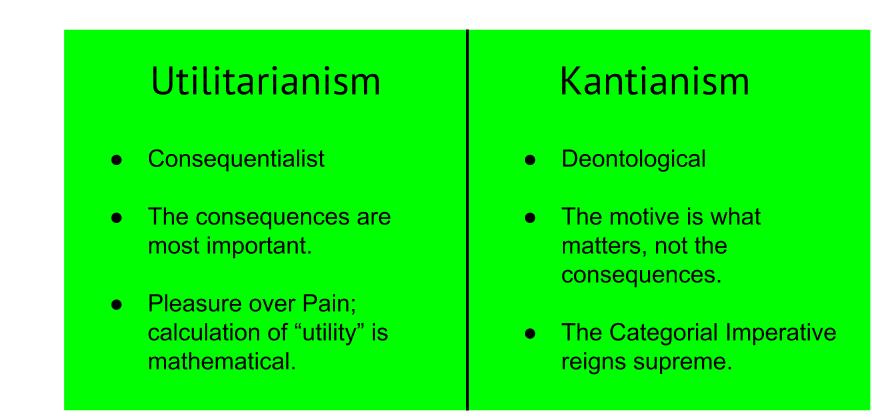

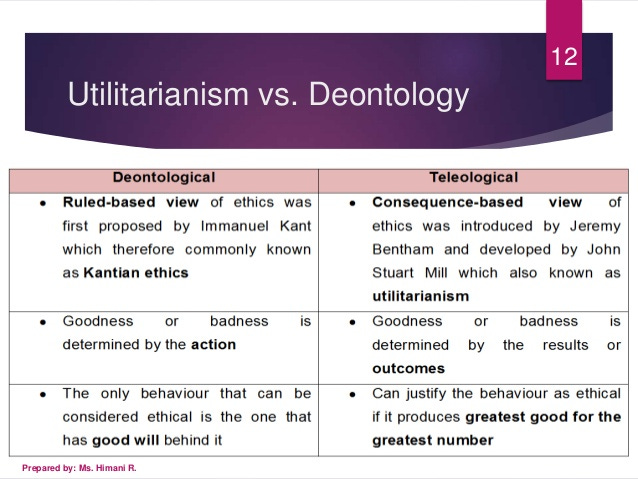

Basically every student who passes through a course that touches on ethics ends up writing a paper on it, and there is probably not a single thought on the matter that hasn’t already been said. Even still, the relationship between intentions and consequences in ethics seems woefully mistaught. Usually, it goes something like this:

One school (usually a conflation of utilitarianism and consequentialism) says that an action is good insofar as it has good consequences.

Another school (associated with Kant and deontology) says that intentions matter. So, for example, it can matter whether you intend to kill someone or merely bring about his death as a result of some other action.

Want to see for yourself? Search for “utilitarianism vs deontology” or something similar in Google Images, and you’ll get things like this:

2. Intuitions

If you’re like me, both sides sort of seem obviously true, albeit in very different senses:

The consequences are the only thing that really matters with respect to the action in question. Like, obviously.

The intentions are the only thing that really matters with respect to the person in question. Like, obviously.

Let me flesh that out a little. If you could magically choose the precise state of the world tomorrow, it seems pretty clear that you should choose the “best” possible world (though, of course, discerning the meaning of “best” might take a few millennia). In other words, consequentialism, though not necessarily net-happiness-maximizing utilitarianism, is trivially true in a world without uncertainty.

Now, come back to the real world. Suppose John sees a puppy suffering out in the cold and brings it into his home. However, a kid playing in the snow gets distracted by John and wanders into the road, only to be hit by a car. John didn’t see the kid, and had no plausible way of knowing that the consequences of his action would be net-negative.

Intuitively, it seems pretty obvious John did the right thing. Even the most ardent utilitarian philosopher would have a hard time getting upset with John for what he did. After all, humans have no direct control over anything but their intentions. Getting practical, it seems silly to reduce John’s access to money and power, let alone put him in jail.

Putting it together

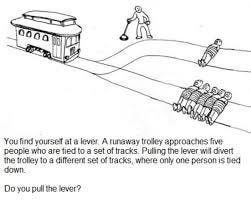

Ultimately, it isn’t too hard to reconcile these intuitions; neither “intentions” nor “consequences” matter per se. Instead, what we should care about, for all intents and purposes, is the “expected consequences” of an action.

Basically, “expected consequences” removes the importance of both “what you really want in your heart of hearts” and the arbitrary luck associated with accidentally causing harm if your decision was good ex ante.

If you know damn well that not pulling the lever will cause five people to be killed instead of one, the expected consequences don’t care that you ‘didn’t mean to do any harm.’ Likewise, no one can blame you for pulling the lever and somehow initiating a butterfly-effect chain of events that ends in a catastrophic hurricane decimating New York City. In expectation, after all, your action was net-positive.
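To make the lever case concrete, here’s a minimal sketch of the arithmetic in Python. Every number in it is made up for illustration: the probabilities, the “lives lost” scale, and the names `expected_value`, `pull_lever`, and `do_nothing` are all my own assumptions, not anything from a textbook. It also only attaches the freak-disaster branch to pulling the lever, which if anything stacks the deck against pulling.

```python
# A toy expected-value comparison. All probabilities and values below are
# hypothetical, chosen only to illustrate the idea.

def expected_value(outcomes):
    """Return the probability-weighted sum over (probability, value) pairs."""
    total_probability = sum(p for p, _ in outcomes)
    if abs(total_probability - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    return sum(p * v for p, v in outcomes)

# Values are measured in lives lost (negative = bad), an assumed unit.
pull_lever = [
    (0.999999, -1),     # the one person on the side track dies
    (0.000001, -1000),  # assumed one-in-a-million butterfly-effect disaster
]
do_nothing = [
    (1.0, -5),          # the five people on the main track die
]

ev_pull = expected_value(pull_lever)
ev_nothing = expected_value(do_nothing)

print("pull lever:", ev_pull)
print("do nothing:", ev_nothing)
print("better ex ante:", "pull lever" if ev_pull > ev_nothing else "do nothing")
```

Under these assumed numbers, the tiny chance of catastrophe barely moves the total, so pulling the lever still comes out ahead in expectation. If the hurricane actually happens, that’s bad luck, not a bad decision.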

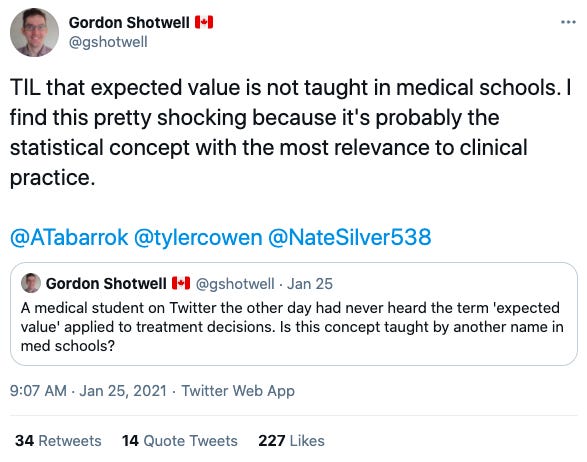

I highly doubt this is insightful or original. In fact, it’s such a simple translation of the concept of “expected value” in economics and statistics that a hundred PhDs much smarter than me have probably written papers on it. Even still, it’s remarkable that this ultrasimple compromise position seems to receive no attention in the standard ‘intro to ethics’ curriculum.

Maybe it’s not so simple…

Is it possible that philosophers just don’t know about the concept? Maybe it is so peculiar to math and econ that “expected value” hasn’t made its way into the philosophical mainstream. Having learned about the concept in a formal sense, I find it pretty simple and intuitive in hindsight. I’m not sure I would have said the same a few years ago, though.

In fact, maybe the concept’s conspicuous absence can be explained by a combination of two opposite forces:

Some professors/PhDs haven’t heard of the concept.

Those who have think it’s too simple and obvious to warrant discussion.

3. It Matters

To lay my cards on the table, I’m basically a utilitarian. I think we should maximize happiness and minimize suffering, and frankly am shocked that anyone takes Kant seriously.

What I fear happens, though, is that students come out of their intro class thinking that under utilitarianism, John was wrong or bad to try to save the puppy. From here, they apply completely valid reasoning to conclude that utilitarianism is wrong (or deontology is right). And, perhaps, some of these folks grow up to be bioethicists who advocate against COVID vaccine challenge trials, despite their enormous positive expected utility.

The fundamental mistake here is an excessively narrow or hyper-theoretical understanding of utilitarianism or consequentialism. Add just a sprinkle more nuance to the mix, and it becomes clear that caring about both intentions and consequences is entirely coherent.

4. Doesn’t solve everything

Little in philosophy is simple. In fact, the whole field basically takes things you thought were simple and unwinds them until you feel like an imbecile for ever assuming you understood consciousness or free will.

“Expected consequences”, for example, leaves open the question of when you should seek out new, relevant information to improve your forecast about some action’s consequences. However, we don’t need to resolve all its lingering ambiguities to make “expected consequences” a valid addition to the standard template of ethical systems.